TiDB Memory Control

Currently, TiDB can track the memory quota of a single SQL query and take actions to prevent OOM (out of memory) or troubleshoot OOM when the memory usage exceeds a specific threshold value. The system variable tidb_mem_oom_action specifies the action to take when a query reaches the memory limit:

- A value of

LOGmeans that queries will continue to execute when thetidb_mem_quota_querylimit is reached, but TiDB will print an entry to the log. - A value of

CANCELmeans TiDB stops executing the SQL query immediately after thetidb_mem_quota_querylimit is reached, and returns an error to the client. The error information clearly shows the memory usage of each physical execution operator that consumes memory in the SQL execution process.

Configure the memory quota of a query

The system variable tidb_mem_quota_query sets the limit for a query in bytes. Some usage examples:

-- Set the threshold value of memory quota for a single SQL query to 8GB:

SET tidb_mem_quota_query = 8 << 30;

-- Set the threshold value of memory quota for a single SQL query to 8MB:

SET tidb_mem_quota_query = 8 << 20;

-- Set the threshold value of memory quota for a single SQL query to 8KB:

SET tidb_mem_quota_query = 8 << 10;

Configure the memory usage threshold of a tidb-server instance

Since v6.5.0, you can use the system variable tidb_server_memory_limit to set the threshold for the memory usage of a tidb-server instance.

For example, set the total memory usage of a tidb-server instance to 32 GB:

SET GLOBAL tidb_server_memory_limit = "32GB";

After you set this variable, when the memory usage of a tidb-server instance reaches 32 GB, TiDB will terminate the SQL operation with the largest memory usage among all running SQL operations in order, until the memory usage of the instance drops below 32 GB. The forcibly terminated SQL operation will return the Out Of Memory Quota! error to the client.

Currently, the memory limit set by tidb_server_memory_limit DOES NOT terminate the following SQL operations:

- DDL operations

- SQL operations that contain window functions and common table expressions

When the memory usage of a tidb-server instance reaches a certain proportion of the total memory (the proportion is controlled by the system variable tidb_server_memory_limit_gc_trigger), tidb-server will try to trigger a Golang GC to relieve memory stress. To avoid frequent GCs that cause performance issues due to the instance memory fluctuating around the threshold, this GC method will trigger GC at most once every minute.

View the memory usage of the current tidb-server instance using the INFORMATION_SCHEMA system table

To view the memory usage of the current instance or cluster, you can query the system table INFORMATION_SCHEMA.(CLUSTER_)MEMORY_USAGE.

To view the memory-related operations and execution basis of the current instance or cluster, you can query the system table INFORMATION_SCHEMA.(CLUSTER_)MEMORY_USAGE_OPS_HISTORY. For each instance, this table retains the latest 50 records.

Trigger the alarm of excessive memory usage

When the memory usage of a tidb-server instance exceeds its memory threshold (70% of its total memory by default) and any of the following conditions is met, TiDB records the related status files and prints an alarm log.

- It is the first time the memory usage exceeds the memory threshold.

- The memory usage exceeds the memory threshold and it has been more than 60 seconds since the last alarm.

- The memory usage exceeds the memory threshold and

(Current memory usage - Memory usage at the last alarm) / Total memory > 10%.

You can control the memory threshold that triggers the alarm by modifying the memory usage ratio via the system variable tidb_memory_usage_alarm_ratio.

When the alarm of excessive memory usage is triggered, TiDB takes the following actions:

TiDB records the following information in the directory where the TiDB log file

filenameis located.- The information about the top 10 SQL statements with the highest memory usage and the top 10 SQL statements with the longest running time among all SQL statements currently being executed

- The goroutine stack information

- The usage status of heap memory

TiDB prints an alarm log containing the keyword

tidb-server has the risk of OOMand the values of the following memory-related system variables.

To avoid accumulating too many status files for alarms, TiDB only retains the status files generated during the recent five alarms by default. You can adjust this number by configuring the system variable tidb_memory_usage_alarm_keep_record_num.

The following example constructs a memory-intensive SQL statement that triggers the alarm:

Set

tidb_memory_usage_alarm_ratioto0.85:SET GLOBAL tidb_memory_usage_alarm_ratio = 0.85;Execute

CREATE TABLE t(a int);and insert 1000 rows of data.Execute

select * from t t1 join t t2 join t t3 order by t1.a. This SQL statement outputs one billion records, which consumes a large amount of memory and therefore triggers the alarm.Check the

tidb.logfile which records the total system memory, current system memory usage, memory usage of the tidb-server instance, and the directory of status files.[2022/10/11 16:39:02.281 +08:00] [WARN] [memoryusagealarm.go:212] ["tidb-server has the risk of OOM because of memory usage exceeds alarm ratio. Running SQLs and heap profile will be recorded in record path"] ["is tidb_server_memory_limit set"=false] ["system memory total"=33682427904] ["system memory usage"=22120655360] ["tidb-server memory usage"=21468556992] [memory-usage-alarm-ratio=0.85] ["record path"=/tiup/deploy/tidb-4000/log/oom_record]The fields of the example log file above are described as follows:

is tidb_server_memory_limit setindicates whethertidb_server_memory_limitis set.system memory totalindicates the total memory of the current system.system memory usageindicates the current system memory usage.tidb-server memory usageindicates the memory usage of the tidb-server instance.memory-usage-alarm-ratioindicates the value of the system variabletidb_memory_usage_alarm_ratio.record pathindicates the directory of status files.

By checking the directory of status files (In the preceding example, the directory is

/tiup/deploy/tidb-4000/log/oom_record), you can see a record directory with the corresponding timestamp (for example,record2022-10-09T17:18:38+08:00). The record directory includes three files:goroutinue,heap, andrunning_sql. These three files are suffixed with the time when status files are logged. They respectively record goroutine stack information, the usage status of heap memory, and the running SQL information when the alarm is triggered. For the content inrunning_sql, refer toexpensive-queries.

Reduce the memory usage for write transactions in tidb-server

The transaction model used by TiDB requires that all write operations of transactions are first cached in memory before being committed. When TiDB writes large transactions, memory usage might increase and become a bottleneck. To reduce or avoid high memory usage by large transactions under various constraints, you can adjust the tidb_dml_type system variable to "bulk" or use Non-transactional DML statements.

Other memory control behaviors of tidb-server

Flow control

TiDB supports dynamic memory control for the operator that reads data. By default, this operator uses the maximum number of threads that

tidb_distsql_scan_concurrencyallows to read data. When the memory usage of a single SQL execution exceedstidb_mem_quota_queryeach time, the operator that reads data stops one thread.This flow control behavior is controlled by the system variable

tidb_enable_rate_limit_action.When the flow control behavior is triggered, TiDB outputs a log containing the keywords

memory exceeds quota, destroy one token now.

Disk spill

TiDB supports disk spill for execution operators. When the memory usage of a SQL execution exceeds the memory quota, tidb-server can spill the intermediate data of execution operators to the disk to relieve memory pressure. Operators supporting disk spill include Sort, MergeJoin, HashJoin, and HashAgg.

- The disk spill behavior is jointly controlled by the following parameters:

tidb_mem_quota_query,tidb_enable_tmp_storage_on_oom,tmp-storage-path, andtmp-storage-quota. - When the disk spill is triggered, TiDB outputs a log containing the keywords

memory exceeds quota, spill to disk nowormemory exceeds quota, set aggregate mode to spill-mode. - Disk spill for the Sort, MergeJoin, and HashJoin operators is introduced in v4.0.0. Disk spill for the non-parallel algorithm of the HashAgg operator is introduced in v5.2.0. Disk spill for the parallel algorithm of the HashAgg operator is introduced in v8.0.0 as an experimental feature and becomes generally available (GA) in v8.2.0. Disk spill for the TopN operator is introduced in v8.3.0.

- You can control whether to enable the parallel HashAgg algorithm that supports disk spill using the

tidb_enable_parallel_hashagg_spillsystem variable. This variable will be deprecated in a future release. - When the SQL executions containing Sort, MergeJoin, HashJoin, HashAgg, or TopN cause OOM, TiDB triggers disk spill by default.

The following example uses a memory-consuming SQL statement to demonstrate the disk spill feature for HashAgg:

Configure the memory quota of a SQL statement to 1GB (1 GB by default):

SET tidb_mem_quota_query = 1 << 30;Create a single table

CREATE TABLE t(a int);and insert 256 rows of different data.Execute the following SQL statement:

[tidb]> explain analyze select /*+ HASH_AGG() */ count(*) from t t1 join t t2 join t t3 group by t1.a, t2.a, t3.a;Because executing this SQL statement occupies too much memory, the following "Out of Memory Quota" error message is returned:

ERROR 1105 (HY000): Out Of Memory Quota![conn_id=3]Execute the same SQL statement. You can find that this time, the statement is successfully executed and no error message is returned. From the following detailed execution plan, you can see that HashAgg has used 600 MB of hard disk space.

[tidb]> explain analyze select /*+ HASH_AGG() */ count(*) from t t1 join t t2 join t t3 group by t1.a, t2.a, t3.a;+---------------------------------+-------------+----------+-----------+---------------+---------------------------------------------------------------------------------------------------------------------------------------------------------------------+-----------------------------------------------------------------+-----------+----------+ | id | estRows | actRows | task | access object | execution info | operator info | memory | disk | +---------------------------------+-------------+----------+-----------+---------------+---------------------------------------------------------------------------------------------------------------------------------------------------------------------+-----------------------------------------------------------------+-----------+----------+ | HashAgg_11 | 204.80 | 16777216 | root | | time:1m37.4s, loops:16385 | group by:test.t.a, test.t.a, test.t.a, funcs:count(1)->Column#7 | 1.13 GB | 600.0 MB | | └─HashJoin_12 | 16777216.00 | 16777216 | root | | time:21.5s, loops:16385, build_hash_table:{total:267.2µs, fetch:228.9µs, build:38.2µs}, probe:{concurrency:1, total:35s, max:35s, probe:35s, fetch:962.2µs} | CARTESIAN inner join | 8.23 KB | 4 KB | | ├─TableReader_21(Build) | 256.00 | 256 | root | | time:87.2µs, loops:2, cop_task: {num: 1, max: 150µs, proc_keys: 0, rpc_num: 1, rpc_time: 145.1µs, copr_cache_hit_ratio: 0.00} | data:TableFullScan_20 | 885 Bytes | N/A | | │ └─TableFullScan_20 | 256.00 | 256 | cop[tikv] | table:t3 | tikv_task:{time:23.2µs, loops:256} | keep order:false, stats:pseudo | N/A | N/A | | └─HashJoin_14(Probe) | 65536.00 | 65536 | root | | time:728.1µs, loops:65, build_hash_table:{total:307.5µs, fetch:277.6µs, build:29.9µs}, probe:{concurrency:1, total:34.3s, max:34.3s, probe:34.3s, fetch:278µs} | CARTESIAN inner join | 8.23 KB | 4 KB | | ├─TableReader_19(Build) | 256.00 | 256 | root | | time:126.2µs, loops:2, cop_task: {num: 1, max: 308.4µs, proc_keys: 0, rpc_num: 1, rpc_time: 295.3µs, copr_cache_hit_ratio: 0.00} | data:TableFullScan_18 | 885 Bytes | N/A | | │ └─TableFullScan_18 | 256.00 | 256 | cop[tikv] | table:t2 | tikv_task:{time:79.2µs, loops:256} | keep order:false, stats:pseudo | N/A | N/A | | └─TableReader_17(Probe) | 256.00 | 256 | root | | time:211.1µs, loops:2, cop_task: {num: 1, max: 295.5µs, proc_keys: 0, rpc_num: 1, rpc_time: 279.7µs, copr_cache_hit_ratio: 0.00} | data:TableFullScan_16 | 885 Bytes | N/A | | └─TableFullScan_16 | 256.00 | 256 | cop[tikv] | table:t1 | tikv_task:{time:71.4µs, loops:256} | keep order:false, stats:pseudo | N/A | N/A | +---------------------------------+-------------+----------+-----------+---------------+---------------------------------------------------------------------------------------------------------------------------------------------------------------------+-----------------------------------------------------------------+-----------+----------+ 9 rows in set (1 min 37.428 sec)

Others

Mitigate OOM issues by configuring GOMEMLIMIT

GO 1.19 introduces an environment variable GOMEMLIMIT to set the memory limit that triggers GC.

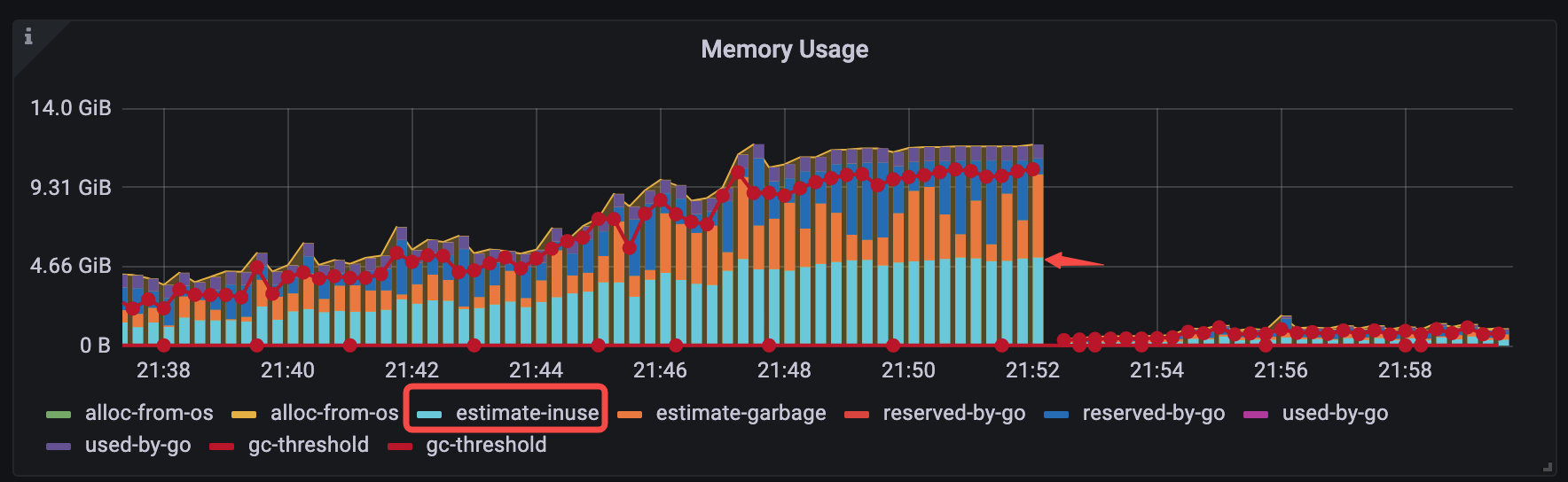

For v6.1.3 <= TiDB < v6.5.0, you can mitigate a typical category of OOM issues by manually setting GOMEMLIMIT. The typical category of OOM issues is: before OOM occurs, the estimated memory in use on Grafana occupies only half of the entire memory (TiDB-Runtime > Memory Usage > estimate-inuse), as shown in the following figure:

To verify the performance of GOMEMLIMIT, a test is performed to compare the specific memory usage with and without GOMEMLIMIT configuration.

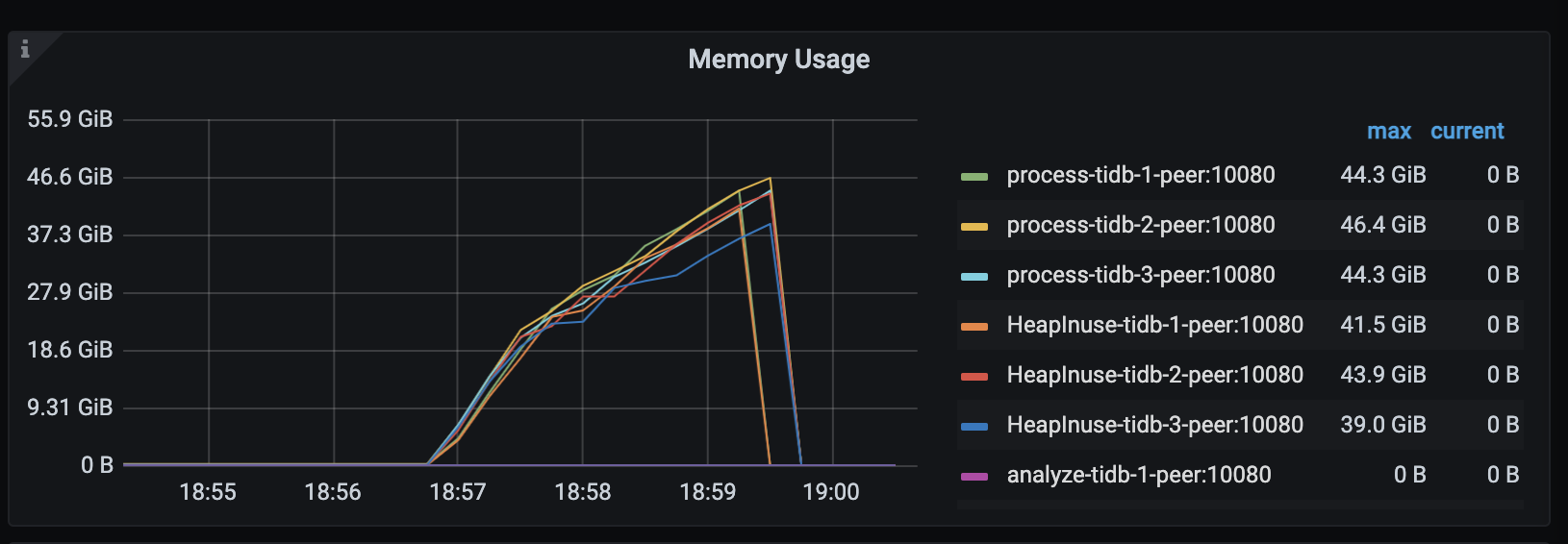

In TiDB v6.1.2, the TiDB server encounters OOM (system memory: about 48 GiB) after the simulated workload runs for several minutes:

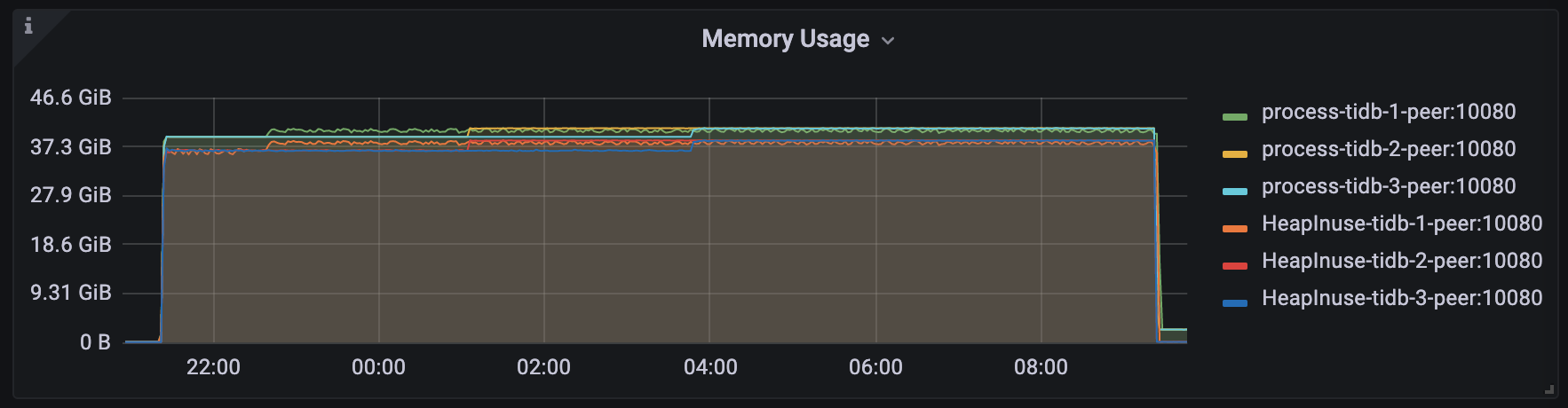

In TiDB v6.1.3,

GOMEMLIMITis set to 40000 MiB. It is found that the simulated workload runs stably for a long time, OOM does not occur in the TiDB server, and the maximum memory usage of the process is stable at around 40.8 GiB: